
High Availability in Zentyal

High Availability (HA) refers to the continuous availability of services and resources in the event of hardware, network or system failures. With Zentyal Server 3.4 community edition, we introduce High Availability for UTM services.

The Corosync (1) engine is used to build and manage the clusters. A cluster consists of several permanently connected Zentyal servers that share a common, synchronized configuration for all the modules that support HA.

The HA-enabled modules in the current version are:

- DHCP

- DNS

- Firewall

- IPS

- Objects and services

- HTTP Proxy

- Certification Authority

- VPN (OpenVPN)

This replication allows you to redirect the traffic from one node to another transparently and without downtime if a node fails.

Apart from cluster management, there is also resource allocation. Resources are unique entities that are assigned to a specific node. The allocation of these resources is performed by Pacemaker (2).

The available resources in this version are:

- Floating IP address

- DHCP Server

It is useful to assign the DHCP active server as a unique resource, given that having more than one DHCP server on the same LAN segment is problematic in most scenarios.

The floating IP is a critical tool for HA operation. If you use DHCP to point the network clients at the floating IP for the default network resources in your infrastructure (NTP, gateway, DNS...), Pacemaker can swap the active node without any interruption for the user. You can think of the floating IP as the abstract IP for the whole cluster.
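The behaviour described above can be illustrated with a small toy model. This is only a sketch of the active/passive idea, not Pacemaker's actual allocation logic. The node names, the `192.0.2.10` address and the `Cluster` class are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    online: bool = True


@dataclass
class Cluster:
    """Toy active/passive cluster: every resource lives on the one active node."""
    nodes: list
    resources: list
    active: str = ""

    def __post_init__(self):
        # Start with the first online node holding all the resources.
        self.active = self._first_online()

    def _first_online(self):
        for node in self.nodes:
            if node.online:
                return node.name
        raise RuntimeError("no online node left to hold the resources")

    def fail(self, name):
        """Mark a node as offline and move the resources if it was active."""
        for node in self.nodes:
            if node.name == name:
                node.online = False
        if self.active == name:
            self.active = self._first_online()

    def allocation(self):
        """Map each resource to the node that currently holds it."""
        return {res: self.active for res in self.resources}


# Hypothetical two-node cluster with the two resource types listed above.
cluster = Cluster(
    nodes=[Node("zentyal-a"), Node("zentyal-b")],
    resources=["floating-ip 192.0.2.10", "dhcp-server"],
)
before = cluster.allocation()
cluster.fail("zentyal-a")
after = cluster.allocation()
print(before)
print(after)
```

Because clients only ever talk to the floating IP, they do not need to know which node holds it before or after the swap.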

Creating the Cluster and adding nodes

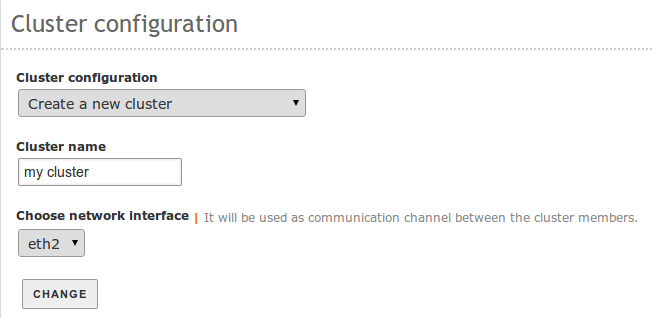

To initialize the new cluster, you will access System ‣ High Availability in the first node and you will see the following form:

In this first node you will Create a new cluster, assign it a name, and choose the network interface you are going to use to reach the other nodes of the cluster.

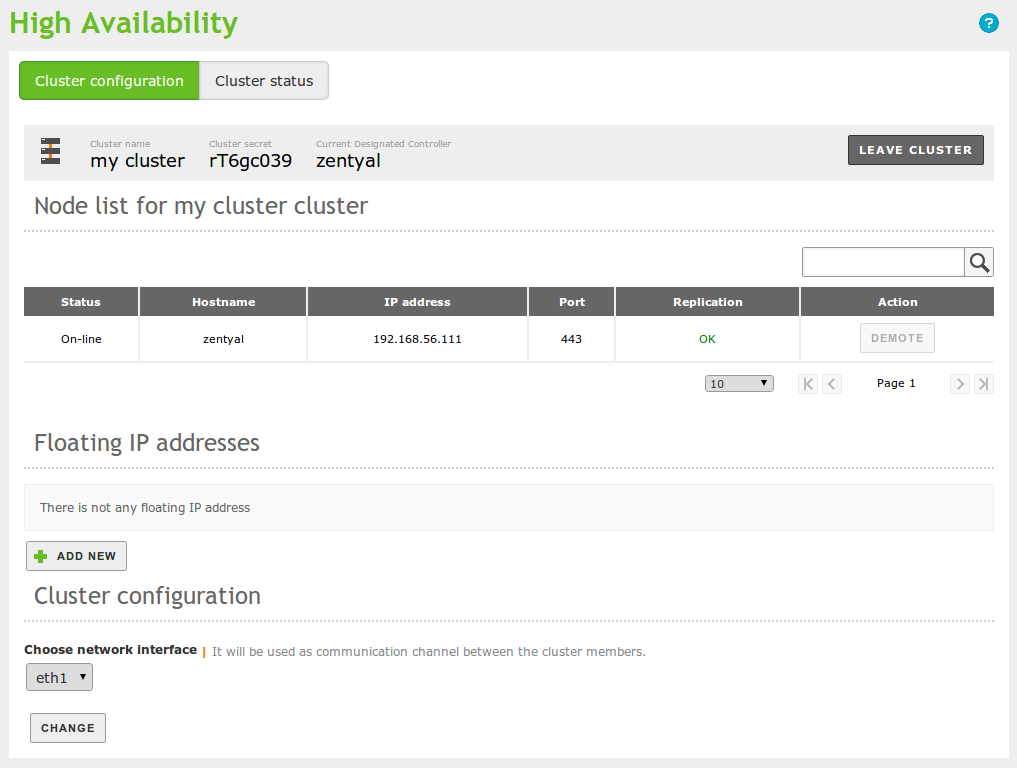

Once the cluster is configured and the module is enabled, accessing the same interface section, you will be presented with the following interface:

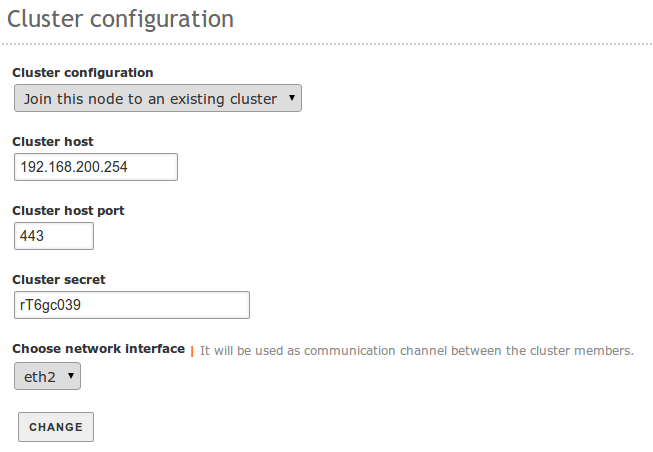

The next step, to be able to use any functionality of this module, is to add additional nodes to the cluster. From each one of the N Zentyal Servers that you want to add to the cluster, you will access System ‣ High Availability and fill this form:

You will have to specify the IP address of any of the other nodes, the link interface, and the cluster secret, which you can read in the general configuration of the first node.

It is important that the nodes of the cluster can access each other without any firewall restriction. Please make sure that you have an object containing the IP addresses of all the nodes and you allow any access from this object.
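Before joining the nodes, it can help to double-check that the firewall object really covers every node. The following is a minimal sketch using Python's standard `ipaddress` module. The function name and the example addresses are hypothetical, and it assumes the object members are written as single addresses or CIDR ranges.

```python
import ipaddress


def nodes_missing_from_object(node_ips, object_members):
    """Return the node IPs not covered by any member of the network object."""
    # strict=False accepts members written with host bits set, e.g. 10.0.0.2/24.
    networks = [ipaddress.ip_network(m, strict=False) for m in object_members]
    missing = []
    for ip in node_ips:
        address = ipaddress.ip_address(ip)
        if not any(address in net for net in networks):
            missing.append(ip)
    return missing


# Hypothetical cluster of three nodes; the object was created before the
# third node existed, so that node is not covered yet.
cluster_nodes = ["10.0.0.2", "10.0.0.3", "10.0.0.4"]
ha_object = ["10.0.0.2/32", "10.0.0.3/32"]
print(nodes_missing_from_object(cluster_nodes, ha_object))
```

An empty list means every node is covered by the object. Any address returned here still has to be added to it.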

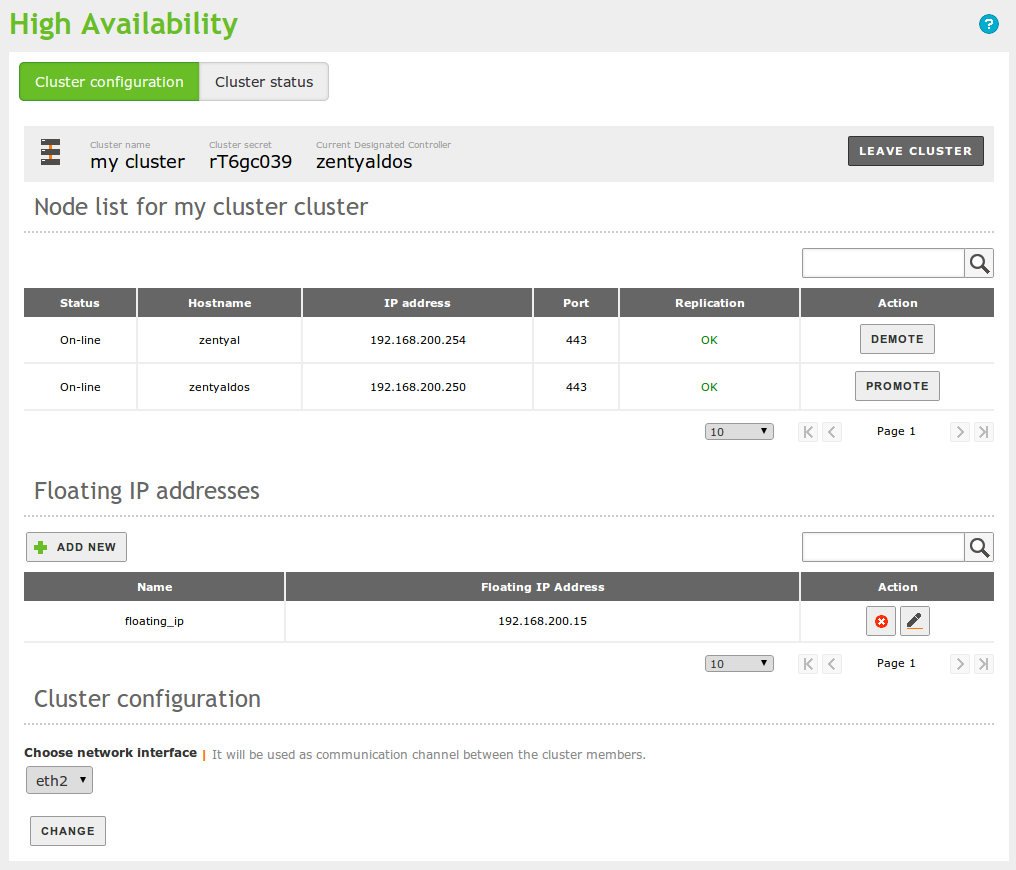

Once you have two or more nodes, you can start managing the different features of the cluster:

In the top part, you can check which node is the Designated Controller, that is, the node that will perform the cluster operations. You only need this information to know which node will contain the full operations log. It is not related to resource allocation.

Further down, you can see the list of active nodes. You can Promote the inactive nodes or Demote the active node. The active node is the one holding the resources, and from this interface you can manually swap the active node. If this node fails, the swap will be performed automatically.

In the bottom part of the interface, you can define the Floating IP addresses that will be assigned to the active node. If the server is acting as the gateway for several LAN segments, you will need a floating IP per segment, so that the malfunctioning node can be fully replaced.
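The "one floating IP per segment" rule can be checked with a short script. This is only an illustrative sketch. The function name and the example networks are hypothetical, and the segments are assumed to be given in CIDR notation.

```python
import ipaddress


def segments_without_floating_ip(lan_segments, floating_ips):
    """Return the LAN segments that contain none of the floating IPs."""
    addresses = [ipaddress.ip_address(ip) for ip in floating_ips]
    uncovered = []
    for segment in lan_segments:
        network = ipaddress.ip_network(segment)
        if not any(addr in network for addr in addresses):
            uncovered.append(segment)
    return uncovered


# Hypothetical gateway serving two LAN segments with only one floating IP.
segments = ["192.168.1.0/24", "192.168.2.0/24"]
floating = ["192.168.1.254"]
print(segments_without_floating_ip(segments, floating))
```

Any segment returned here would lose its gateway address after a failover, because no floating IP in that segment moves to the new active node.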

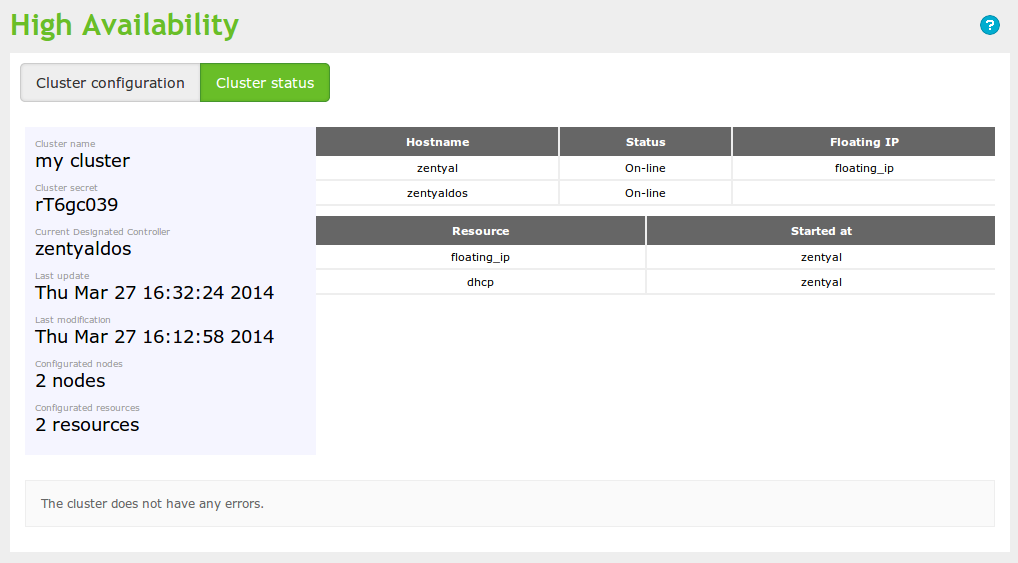

From the Cluster status tab, you can see the current resource allocation, the state of the nodes, time since the last changes and some other data:

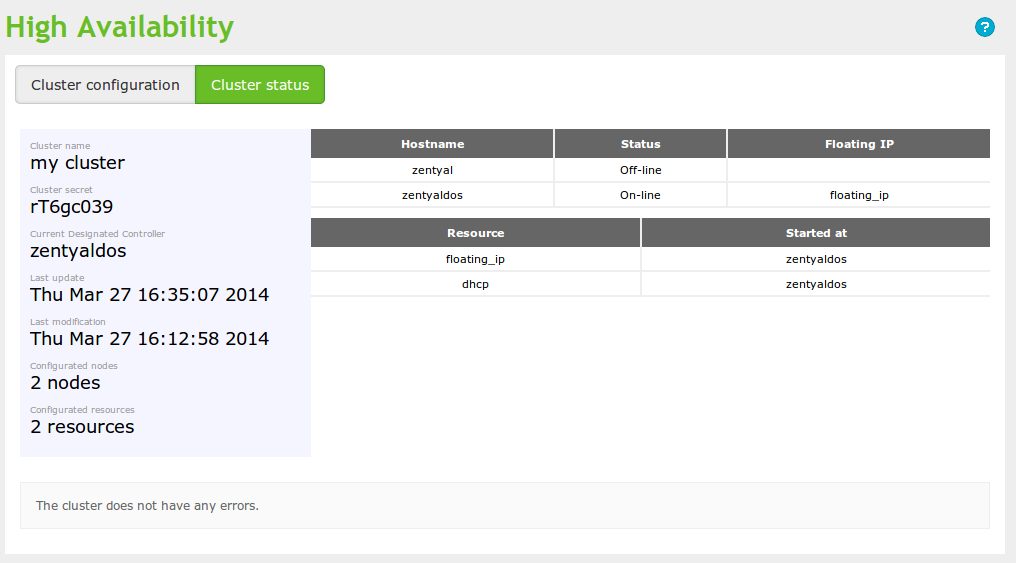

If you now simulate the active node failure, you will be able to see the following changes in the status:

You can see in the image above that the former active node is marked as Off-line and the resources have been assigned automatically to the former passive node.

Given this example configuration, you can, for instance, use DHCP to give clients the floating IP as their DNS server. Since all your nodes have exactly the same DNS information, you can transparently swap nodes if necessary.